‘Clarity will be the new currency’ – what leaders in Singapore learned from a year of AI

By 2026, AI will no longer be judged by how impressive it sounds, but by how reliably it helps leaders make the right decisions. Across industries, founders and operators agree: AI has dramatically accelerated decision-making – but it has also raised the cost of being wrong. The same view surfaced at Wizly Connections, a recent roundtable where leaders from communications and product described provenance, expert review and audit trails as operating requirements.

In this new landscape, trust, verification, and clarity are becoming the real competitive advantages.

Executive digest

Leaders are leaning on AI for speed and breadth, using it to scan, summarise, and orient faster than ever before. But as AI becomes ubiquitous, confidence alone is no longer persuasive. Human expertise is re-emerging as a critical verification layer, while authenticity, provenance, and decision clarity are becoming strategic assets. The winners won’t be the teams with the most AI, but the ones that know when to slow down, verify, and compress what truly matters.

What’s changed in decision-making

Speed-first, reflect-later.

Teams are increasingly outsourcing the “first understanding” of a problem to AI – drafting strategies, summarising markets, outlining options in seconds. The risk: mistaking speed for insight. Roundtable participants stressed the need to reintroduce deliberate pauses (“reflection latency”) before high-stakes calls to avoid shallow or derivative decisions.

Multi-model doesn’t eliminate errors.

Using multiple LLMs to cross-check outputs reduces risk, but it doesn’t solve the core problem. Models can converge on the same confident falsehoods, especially when trained on similar data. Verification must include primary sources and domain experts, particularly in high-stakes contexts.

Prompt discipline is rising.

The bottleneck has shifted from retrieval to relevance. Leaders now demand concise decision packets – “the three things that matter” with options, trade-offs and cited evidence – over 10-page dumps. Prompting is becoming an operational discipline.

Trust, authenticity, and the verification layer

Authenticity is scarce.

In a world of AI-generated everything, default scepticism is rising: “Is that real?” is now the baseline reaction to AI-mediated content. Edelman’s 2025 Trust Barometer reports widening trust gaps and declining confidence in information, pushing brands to show provenance and accountability. Gartner forecasts that AI TRiSM frameworks will become table stakes by 2026, making source control, model lineage, and monitoring standard practice. Disclosure matters: when organisations openly state their AI use, trust improves as long as quality remains high.

Experts validate reality.

AI accelerates exploration; experts validate truth. IBM’s 2025 AI Governance Pulse findings note that organisations with defined review gates and auditability report fewer high-impact AI errors and faster incident resolution. Treat wrong outputs as defects, not quirks, and require expert sign-off in red-flag domains (legal, medical, financial, safety, reputational).

Countering confidence bias.

Fluent AI can persuade even when wrong. Leaders are training teams to demand “confidence-to-evidence” mapping – claims tied to sources, assumptions, and verifiable facts – and to use structured counterarguments before decisions.

Where AI delivers real value

Efficiency.

AI excels at automating low-judgment, repetitive work – reports, meeting recaps, formatting, and basic analysis – freeing humans to focus on higher-value tasks. In practice, enterprises that redesign workflows around AI report faster speed-to-value than those chasing tool count.

Gut-checks.

Many operators use AI as an orientation tool: a fast TL;DR that informs initial thinking without replacing judgment.

Creative scaffolding.

AI is powerful for rapid structuring and concepting, but founders consistently stress that humans must finish the work – bringing taste, context, and standards that models still lack. “AI for structure, humans for taste” is becoming the operating principle.

Where AI fails (and why it matters)

Errors, not “hallucinations.”

Soft language obscures real risk. Wrong outputs are defects, not quirks, and can have serious operational consequences if left unchecked. Roundtable anecdotes included a confidently cited but non‑existent product, reinforcing the need for primary source checks.

Confidence bias.

Fluent language persuades, even when it’s wrong. Teams are increasingly training themselves to demand explicit evidence-to-claim mapping rather than trusting tone or coherence.

Apprenticeship risk.

Automation erases “learning by doing”, threatening talent pipelines. Founders warn that organisations must redesign training around supervised practice, simulations, and intentional “manual mode” experiences.

Voice flattening.

AI writing patterns erode individuality. Many leaders now insist on “write first, AI edit” to preserve authentic voice, especially for public-facing work.

Agents: hype versus real value

Despite the hype, most operators report limited day-to-day impact from AI agents so far. Today’s value is largely concentrated in lightweight productivity tasks such as summaries and coordination. Outbound agents that act on an organisation’s behalf risk an uncanny sense of mistrust if they block access to a human or feel deceptive. Stronger use cases include bookings, training simulations and customer support, provided there are clear quality controls and human escalation.

Industry lens: Oil & Gas pragmatism

In sectors like oil and gas, pragmatism dominates. Data is sparse, downtime is expensive, and perfection isn’t required. An AI system that’s 80–85% accurate can still deliver massive value if it reduces outages or flags the riskiest assets. Operators favour small, vertical models and retrieval-augmented systems over general-purpose LLMs, using AI to prioritise the hardest 15–20% of problems so humans can focus where judgment matters most.

Wizly’s predictions for 2026

Clarity becomes a competitive advantage. In an era of infinite information, leaders who compress relevance will win.

Consolidation and specialisation. Fewer tools, better integrated around workflows; bundles will replace fragmented stacks.

“Lazy AI” gets punished. Disclosure, verification, and high standards will build trust; shortcuts won’t.

A generational evaluation gap emerges. Organisations will need to explicitly teach verification, reasoning, and epistemic humility.

Job mix shifts. Routine roles shrink, while judgment-heavy roles grow in importance.

Open questions leaders must resolve

How do we define a “dynamic deal” when inputs constantly change?

How do we maintain quality when training data is increasingly polluted?

Can we design AI systems that productively disagree instead of simply agreeing?

And how do we preserve apprenticeship models when grunt work disappears?

An action checklist for founders and team leads

Codify verification gates, especially for red-flag domains.

Institutionalise reflection latency before high-impact decisions.

Standardise prompts, sourcing, and relevance compression.

Train teams against confidence bias with structured counter-arguments.

Redesign talent pipelines with supervised reps and manual rotations.

Set clear agent guardrails and preserve human access.

Protect authentic voice: write first, let AI edit.

The bottom line: In an era of agentic acceleration, clarity is the scarce resource. Leaders who wire AI into real workflows, verify reality with experts, and scale authentic decision‑making will outpace rivals stuck in pilot purgatory.

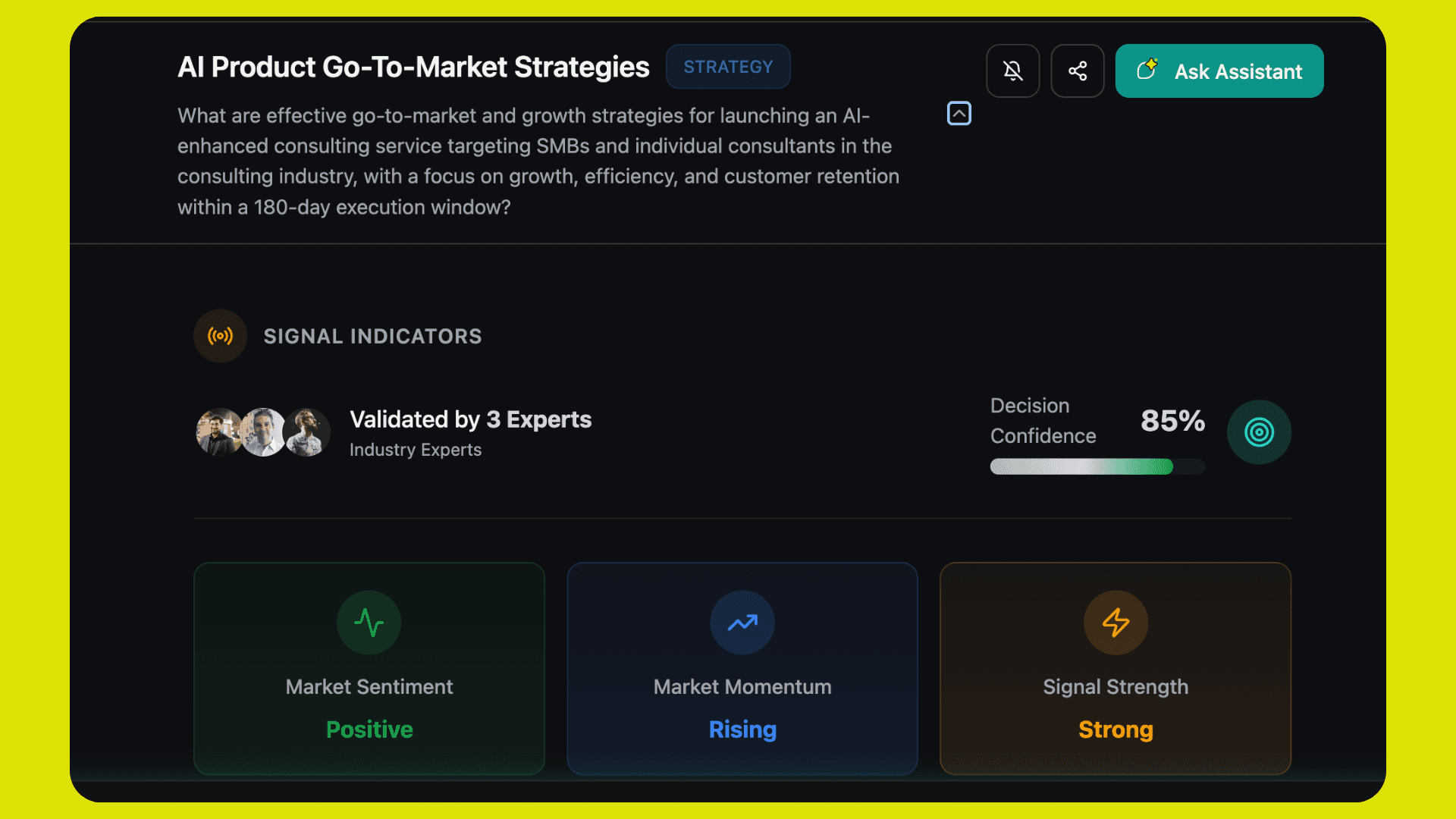

New: We’re launching Wizly’s Decision Dashboard, a verification-first upgrade that turns expert knowledge and AI outputs into concise decision packets. It’s designed to operationalise trust: faster orientation from AI, final judgment by experts, and clear accountability for every decision. Early access is now officially open.

Turn Wisdom into Velocity

© 2025 Wizly Corp Pte Ltd. "Wizly" and the Wizly logo are registered trademarks of the company.